Oracle CDC (Change Data Capture) for Real-time Oracle Replication

Oracle Change Data Capture better known as Oracle CDC delivers real-time changes from an Oracle source database to popular destinations like Snowflake, SQL Server, Azure Synapse, ADLS Gen2, Redshift, S3, BigQuery, Oracle, Databricks, Postgres, SingleStore, Teradata and Kafka for effective and easy consumption of data. When it comes to Oracle replication, the CDC process includes data that is inserted, updated and deleted in the right sequence, so that these changes can be replayed on the destination, in real-time or at the desired frequency. Oracle CDC eliminates the need for full extracts which can be put a huge load on the source system, can be time-consuming and may sometimes not even be possible, due to the sheer amount of data involved. Learn about Real-time Oracle Replication, step by step

Oracle CDC Replication – what you should know

- What is Oracle CDC or Oracle Change Data Capture?

- Oracle Replication Types with CDC

- Oracle CDC using Transaction Logs (Log based CDC)

- Oracle CDC 12c: Oracle Streams deprecated

- Oracle CDC 19c: Oracle LogMiner Continuous mining deprecated

- Oracle GoldenGate Replication

- Oracle RAC Replication (Real Application Clusters) CDC

- Oracle CDC for High Throughput/High Volume environments

- Oracle CDC in a catch-up scenario

- Remote Log Mining for Oracle CDC

- Why the process needs to be accurate for Oracle CDC

- Oracle CDC: Time series your data for Point-in-time Analytics

- What to look for in an Oracle Replication Tool

- The Easier Oracle Replication Tool: BryteFlow

What is Oracle CDC or Oracle Change Data Capture?

Why Oracle Database Replication needs Oracle CDC

Thousands of organizations across the world use Oracle. The Oracle database (Oracle DB) is a relational database management system (RDBMS) and is used to store different types of information like data from HR, Operations, Sales, Finance, etc. This data can be accessed and analysed to derive insights, drive ERP, for business analysis etc. However, for effective analytics, the Oracle data replicated to on-premise or on cloud platforms like S3, Redshift, Snowflake, Databricks, Teradata, Azure Synapse or SQL Server needs to be current and in sync with the source. Learn about Oracle to SQL Server Migration

About Oracle CDC

Oracle CDC (Change Data Capture) is the technology that efficiently identifies and captures data that has been added to, updated, or deleted from Oracle tables, and makes the change data available for use by destination applications. Oracle to Oracle Replication

When an application performs a transaction and that is saved, the corresponding inserts, updates or deletes to the Oracle tables can be captured. Conversely, if the transaction is not saved, then this change data is not captured. Learn about SQL Server CDC for real-time SQL Server Replication

Oracle Replication Types with CDC:

Oracle CDC using Timestamps

Audit timestamps that record the date and time when a record was inserted or updated can be used for replicating the changed records. Oracle to Azure Cloud Migration (Know 2 Easy Methods)

Disadvantages of timestamps:

- Deleted records cannot be identified – making this an unusable solution for most scenarios Oracle vs Teradata (Migrate in 5 Easy Steps)

- Queries need to be run continually on the source Oracle database and tables, making this a high overhead solution

- Audit dates and timestamp columns may not be recorded accurately by the source application, leading to incorrect replication. Timestamp and trigger issues for CDC

Using Triggers

Triggers can be defined on the tables to be replicated. These trigger off with every insert, update and delete and can be collected into another change table with the timestamps. Replication can then be done from the audit table.

Disadvantages:

- Triggers also put a load on the Oracle database increasing the overheads

Change Data Capture Types and CDC Automation

Log based Oracle CDC Using Transaction Logs

Log based CDC is by far the best Oracle CDC method as this puts minimal load on the Oracle source system and can replicate all the changes accurately. The Oracle CDC method using transaction logs, mines the Oracle redo-logs and/or the archive logs that Oracle generates for its own recovery in case of a failure. When the logs are mined, transactions can be applied on the destination, as they happened on the source, thus providing a real-time view of the data on the destination. This data can be accessed on the destination or the target which is usually a data lake or data warehouse or an analytical appliance. Kafka CDC and Oracle to Kafka CDC Methods

Data can then be consolidated across various enterprise systems and applications, to get rich insights to identify key business opportunities, get operational efficiencies, identify key business trends, and achieve a competitive advantage. Oracle CDC with transactional logs works on all Oracle versions including 12c and 19c, as it only relies on access to the Oracle redo and archive logs. However, the format of these can change drastically between different Oracle versions. AWS DMS Limitations for Oracle Replication

Oracle CDC 12c: Oracle Streams deprecated

Oracle Streams was Oracle’s native CDC tool that was provided free for Oracle databases and could be used for real-time data transfer. Oracle Streams could be used to automatically push the transactional changes to a queue, which could be utilised for replication. Oracle Streams has been deprecated from version Oracle 12c. Oracle is driving users to Oracle GoldenGate which is a paid version with high licencing costs. Oracle CDC to Kafka

Oracle CDC 19c: Oracle LogMiner Continuous mining deprecated

Oracle LogMiner is a utility provided by Oracle to purchasers of its Oracle database, provides methods of querying logged changes made to an Oracle database, principally through SQL commands referencing data in Oracle redo logs. It is primarily used by DBAs to query transaction logs. Oracle LogMiner has a feature to deliver real-time changes from Oracle redo-logs. This feature is used by most third-party CDC tools to replicate Oracle data for their customers. With 19c, Oracle has deprecated this feature as well, to (you guessed it!) – drive customers to their paid offering Oracle GoldenGate, which has high licensing costs. Connect Oracle to Databricks and Load Data the Easy Way

Oracle GoldenGate Replication

Oracle GoldenGate provides real-time, heterogenous replication from sources using CDC. It is a replication tool that is available as an add-on licensed option for Oracle’s Oracle Enterprise Edition Database or can be separately licensed for a non-Oracle database. Oracle vs Teradata (Migrate in 5 Easy Steps)

However, Oracle GoldenGate may need other Oracle licensed products in tandem to deliver efficient replication. Data replication requires table creation on the target based on table definitions at source and the need for efficient data type mapping to avoid data loss. For this you will need Oracle Data Integrator (ODI), Oracle’s Extract, Transform and Load (ETL) tool. Oracle to Oracle Replication with BryteFlow

Oracle GoldenGate will deliver CDC (Change Data Capture) replication with incremental changes merged on destination in real-time continually. High throughput, minimal impact on source systems is a hallmark of CDC. However, for big data targets like Amazon S3 or Snowflake, you need Oracle GoldenGate for Big Data. Oracle to Postgres Migration (The Whys and Hows)

To verify and reconcile data with source you will need Oracle Veridata. For a graphic user interface, Oracle GoldenGate Studio will be required. For easy alerting and monitoring capabilities you will need Oracle GoldenGate Management Pack (plugged into Oracle Enterprise Manager). All these products need to be licensed separately so using Oracle GoldenGate can be a very expensive proposition, not to mention the integration between these various products that can lead to increased time and cost. Oracle to Azure Cloud Migration (Know 2 Easy Methods)

Oracle GoldenGate needs the XStream API which means additional licensing

Oracle XStream API enables high throughput, real-time CDC from Oracle DB and GoldenGate requires XStream to provide real-time CDC from an Oracle 19c database. It is useful for engineers who need high-speed, low-level access to database logs for transactional database replication. However with BryteFlow, you do not need additional licensing for the Oracle GoldenGate XStream module. Instead BryteFlow gets real-time data from Oracle 19c redo logs without using the licensed Oracle GoldenGate XStream product and is more performant and cost effective. Bryteflow for Oracle Replication

Oracle RAC Replication (Real Application Clusters) CDC

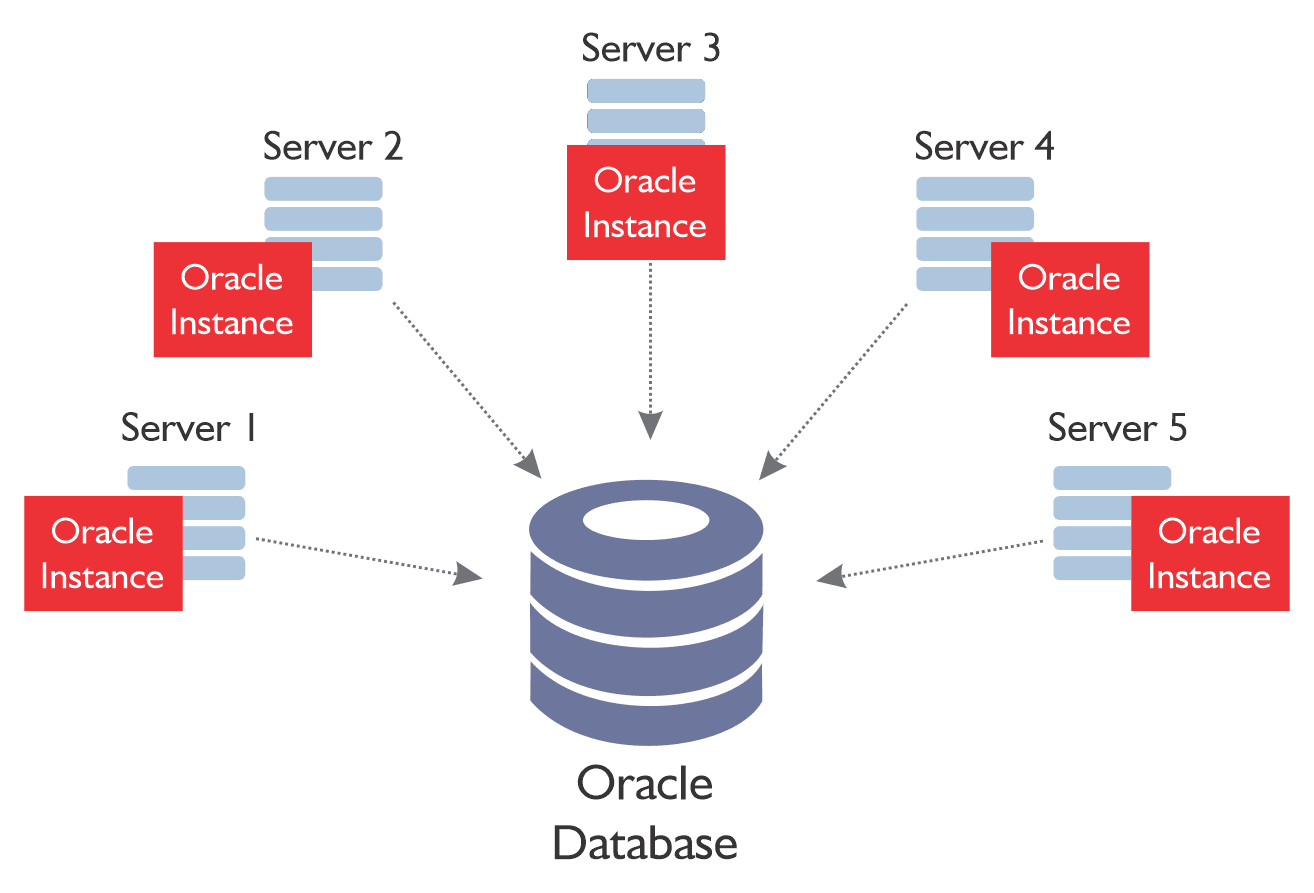

Oracle Real Application Clusters (RAC) provides a highly available and scalable Oracle database environment. It allows customers to run a single Oracle database across multiple servers while accessing shared storage. Oracle CDC to Databricks

If a server in the cluster fails, the database instance will continue to run on the remaining servers or nodes in the cluster. This also means that customers can start small and add nodes or servers as necessary. Oracle CDC to Teradata

The interesting aspect for Oracle CDC for an Oracle RAC environment is that every single server/node creates a unique transaction log, recognized by thread identifier and an incremental series. The logs must be read and applied in the sequence they are generated by the servers and then pieced together. This adds a significant level of complexity to the Oracle CDC process. Connect Oracle to Databricks and Load Data the Easy Way

Oracle CDC for High Throughput/High Volume environments

Some Oracle environments follow patterns, where a bulk of the transactions come rapidly for a few hours in a day and then resume at a normal pace again. The challenge with processing in these environments is that the CDC process needs to be able to handle the high throughput spikes and scale appropriately so that replication happens at the same speed as when the system is quieter. Most CDC tools will see significant latency in processing CDC in these high throughput environments. Oracle to SQL Server Migration: Reasons, Challenges and Tools

Oracle CDC in a catch-up scenario

When there is a difficulty accessing the Oracle database due to network issues or some other issue, replication cannot be performed, and there is a huge backlog of transactions that need to be replicated once the issue is resolved. Catch up needs to be done as if CDC was operating on a “high throughput” environment described in the point above. If not, the current transactions will be delayed significantly, or worse, may never catch up! Add the complexity of a RAC environment to this, and you have a potential data replication nightmare on your hands. Oracle vs Teradata (Migrate in 5 Easy Steps)

Remote Log Mining for Oracle CDC

This refers to a scenario when the Oracle source system is overloaded. In this situation any extra process, and even the slightest load may prove to be a risk to the operational performance of the Oracle source database. The only option may be to mine the log files remote from the Oracle source database. The logs can be mined on a completely different server therefore there is zero load on the source. Oracle to Postgres Migration

Why the process needs to be accurate for Oracle CDC

When an application performs a transaction, that is saved, the corresponding change data is generated in the associated underlying Oracle tables. When performing CDC, it is good to maintain referential integrity i.e. take all the change data across for the transaction. If replicated only partially, it may lead to referential integrity issues on the destination. Source to Target Mapping Guide (What, Why, How)

Moreover, the Oracle CDC process may be running without any errors and delivering data with acceptable latency, but how would you know that every change is being replicated to the destination? How would you know that the data replicated is accurate and trustworthy? Postgres to Oracle with BryteFlow

Oracle CDC: Time series your data for Point-in-time Analytics

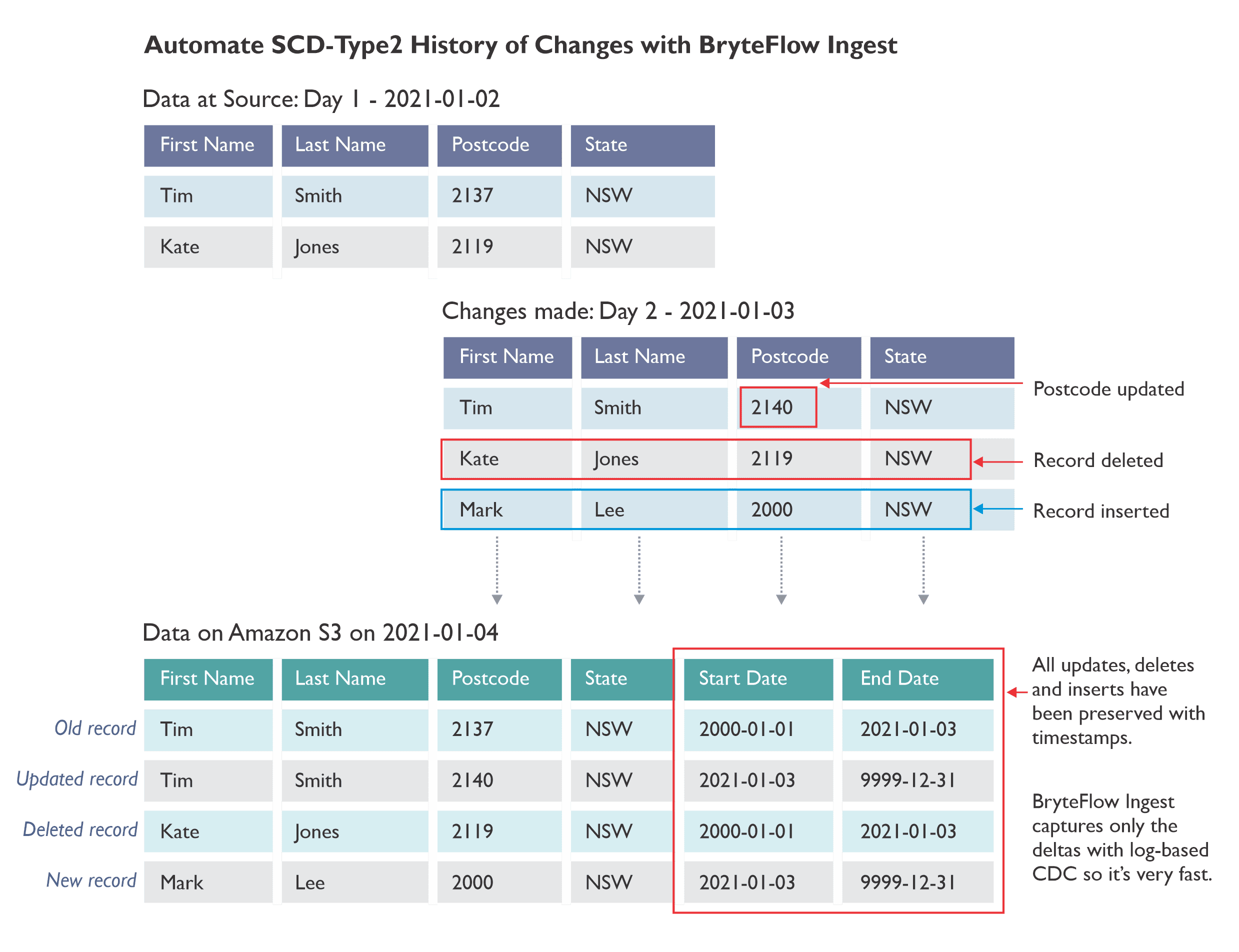

Sometimes for analytics point-in-time queries are particularly important on the destination data lake or data warehouse. It is also important that the SCD type2 history timestamps have the commit date from the Oracle source, or the timestamp that the records were saved to Oracle with, rather than the timestamp when they are loaded to the destination. If commit timestamp is not used in the SCD type 2 history generation, then any delays in the loading of data can adversely affect the point-in-time analytics. Oracle to Snowflake: Everything You Need to Know

What to look for in an Oracle Replication Tool

An automated Oracle Replication Tool can be infinitely faster and easier than hand-coding. Hand-coding an Oracle CDC solution can be extremely challenging. Maintaining a hand-coded solution for future versions of Oracle can also pose serious problems. Hence, using an Oracle replication tool is an easier solution to consider. Oracle to Snowflake: Everything You Need to Know

When selecting an Oracle replication tool, these are some points to consider: Is the tool going to work with Oracle 12c, and Oracle 19c when you upgrade? Can it handle high throughput; can it catch up and recover in an outage scenario and handle large CDC volumes easily; can it work remotely and can it deliver data that is accurate, trustworthy and real time? Oracle to Azure Cloud Migration (Know 2 Easy Methods)

BryteFlow’s Unique Architecture for Oracle CDC

The Easier Oracle Replication Tool: BryteFlow

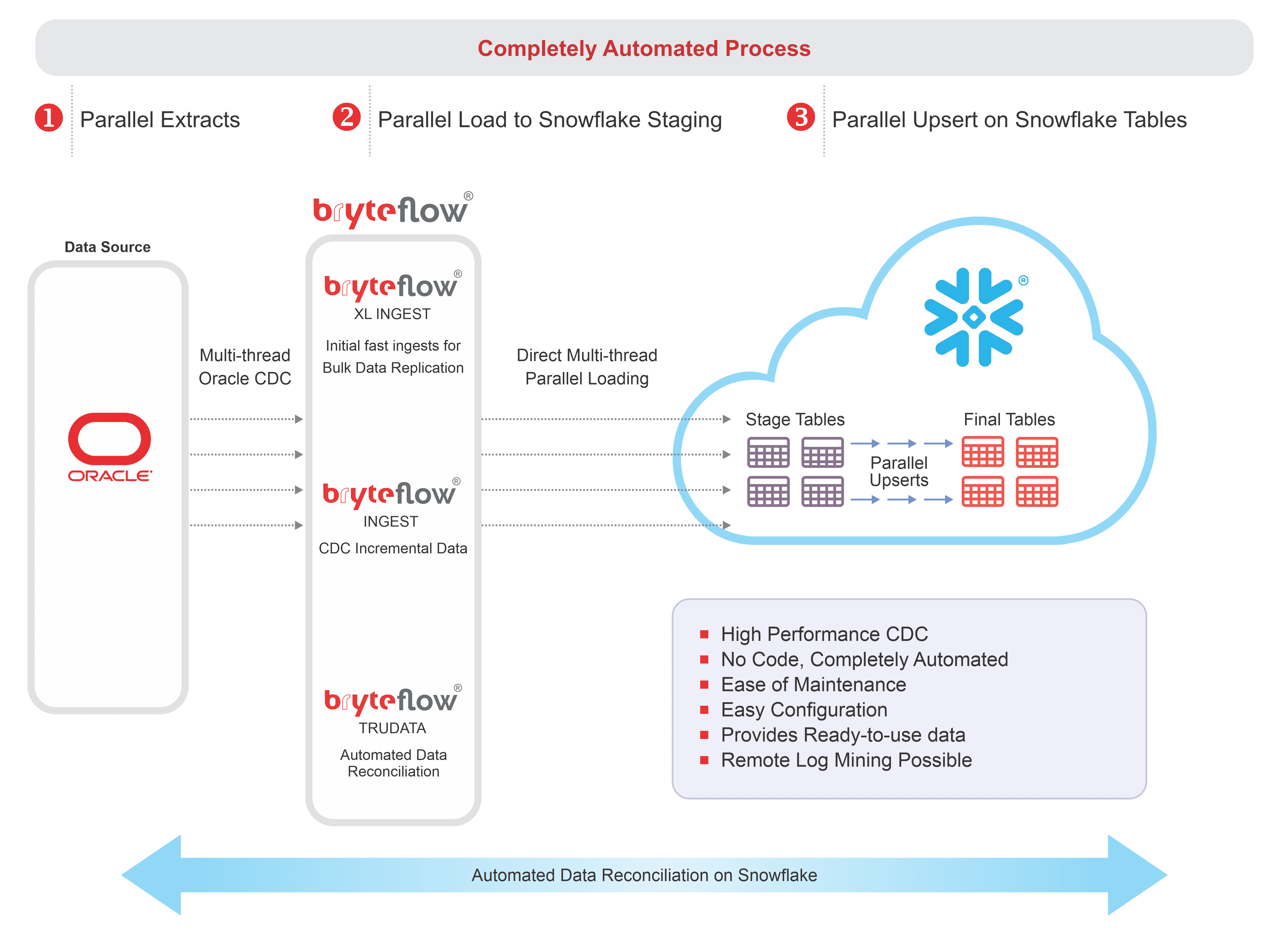

- BryteFlow is an Oracle replication tool that can help you with all the points outlined earlier. It is automated, real-time and codeless, making the Oracle CDC process effortless, scalable, highly performant and accurate. Oracle vs Teradata (Migrate in 5 Easy Steps)

- Oracle data replication is future-proof, even when you update your Oracle versions. It does not need any cumbersome, hard-to-manage agents on the Oracle source or destination.

- BryteFlow does not need the GoldenGate XStream module (saves on additional licensing costs) to enable real-time Oracle CDC. It gets real-time data from Oracle 19c redo logs with its own log parser tool. BryteFlow as an alternative to GoldenGate

- It works with Oracle RAC environments effortlessly. Debezium CDC Explained and a Great Alternative CDC Tool

- It works with high throughput environments and catch-up situations by mining logs in parallel and hence scaling the CDC process replication to the destination or target. Successful Data Ingestion (What You Need to Know)

- It can be made to work completely remotely, and not access the source Oracle database at all.

- For initial synchronisation of data to the destination, it can run parallel ingestion threads, using smart parallel partitioning algorithms to quickly get the data to the destination. Postgres to Oracle with BryteFlow

- It maintains referential integrity by taking all commit data synchronised for the replication frequency. Oracle to Snowflake: Everything You Need to Know

- It has an inbuilt ability to do data reconciliation between source and destination, with counts and checksums, and the ability to repair or correct data that does not reconcile, without doing an initial extract.

- It builds automated SCD type2 history on the destination with the commit timestamp in Oracle. Any constantly updated audit source fields can be removed from replication, so that the changes captured are true changes on the source. Postgres CDC (6 Easy Methods to Capture Data Changes)

- BryteFlow is a blisteringly fast Oracle replication tool replicating over 1,000,000 rows in just 30 seconds and at least 6x faster than Goldengate.

- BryteFlow provides replication support for all Oracle versions, including Oracle 12c, 19c, 21c and future releases for the long term.