Snowflake Integration in Real-time without Coding

What is Snowflake Data Warehouse?

The Snowflake Data Warehouse, or Snowflake as it is popularly known, is a cloud data warehouse. It enables data storage and analytics on a dynamic, highly scalable platform in the cloud.

Data Integration for Snowflake on AWS and Azure

BryteFlow can help you deploy your real-time Snowflake data lake or data warehouse on AWS and Azure quickly and without coding. BryteFlow uses enterprise-grade, log-based change data capture to move data in real time to the Snowflake data warehouse from legacy databases such as Oracle, SQL Server, SAP, PostgreSQL and MySQL, and from applications such as Salesforce. It maintains a replica of the source structures in Snowflake and automatically merges the initial and delta loads, with SCD Type 2 history if required.
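To make the SCD Type 2 merge concrete, here is a minimal Python sketch of how initial and delta loads can be merged into a versioned history. It is not BryteFlow's implementation: the `VersionedRow` class, the `merge_scd2` function and the `(key, values, change_time)` record shape are hypothetical, and a `None` value is assumed to mean the source row was deleted. Each change closes the current version of a key by setting its `valid_to` timestamp and opens a new version, so every past state is preserved.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional, Tuple


@dataclass
class VersionedRow:
    key: int
    values: dict
    valid_from: datetime
    valid_to: Optional[datetime] = None  # None marks the current (open) version

    @property
    def is_current(self) -> bool:
        return self.valid_to is None


def merge_scd2(history: List[VersionedRow],
               delta: List[Tuple[int, Optional[dict], datetime]]) -> None:
    """Merge hypothetical (key, values, change_time) records into an SCD Type 2 history.

    values=None means the source row was deleted. history is updated in place.
    """
    current = {row.key: row for row in history if row.is_current}
    for key, values, changed_at in sorted(delta, key=lambda rec: rec[2]):
        old = current.get(key)
        if values is None:
            # Delete: close the open version and add no new one
            if old is not None:
                old.valid_to = changed_at
                del current[key]
            continue
        if old is not None and old.values == values:
            continue  # nothing actually changed, keep the open version
        if old is not None:
            old.valid_to = changed_at  # close the previous version
        new_row = VersionedRow(key, dict(values), changed_at)
        history.append(new_row)
        current[key] = new_row
```

The same function handles the initial load (an empty history) and later delta loads. In a warehouse this rule would more typically run as a set-based `MERGE` statement; the row-by-row loop simply makes the versioning logic easy to follow.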

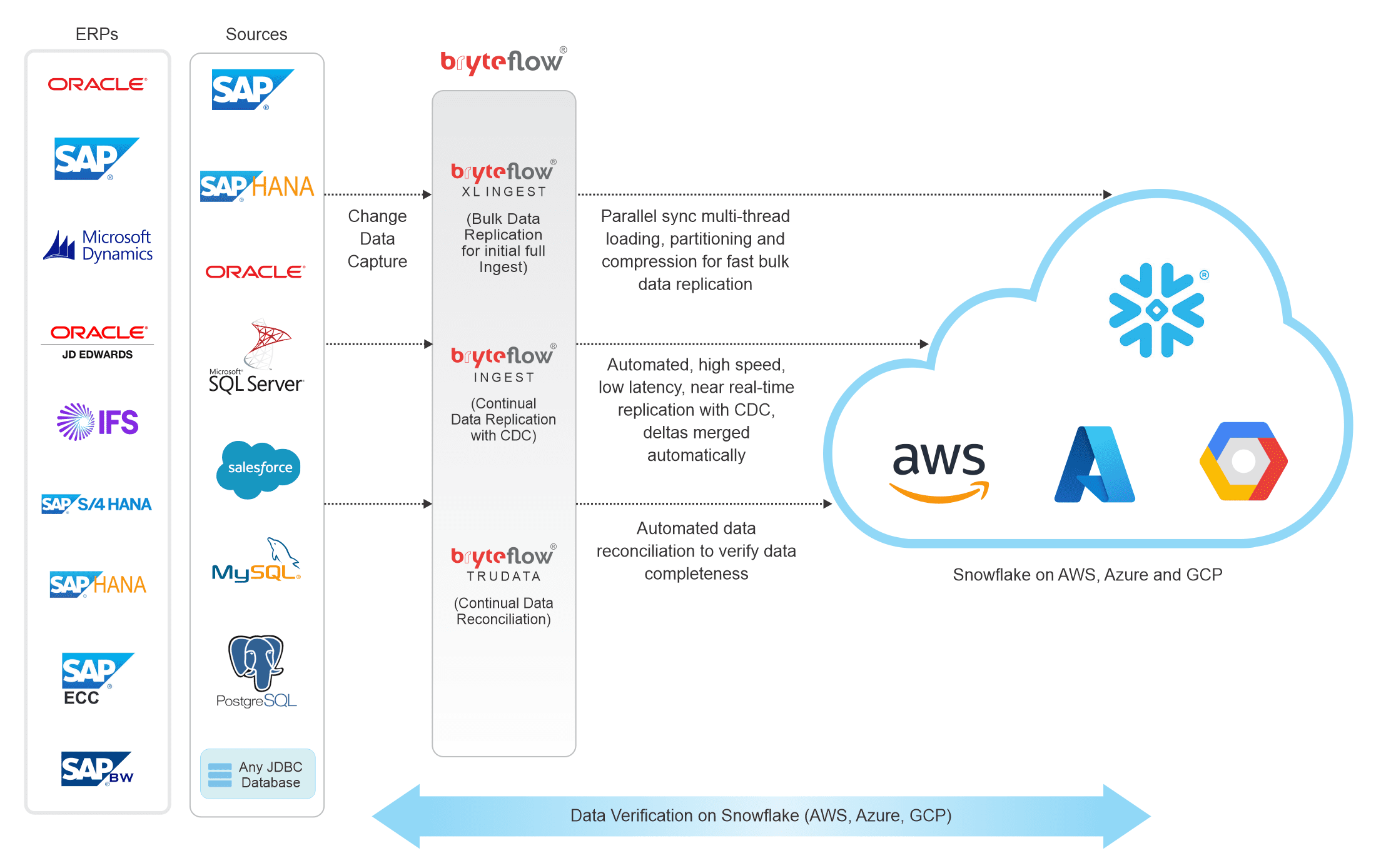

BryteFlow’s Technical Architecture

How BryteFlow works with the Snowflake Data Warehouse

BryteFlow provides real-time Snowflake integration. Here's what you can do with BryteFlow on your Snowflake data warehouse.

Replicate data to the Snowflake data warehouse with Change Data Capture and keep the history of every transaction

BryteFlow continually replicates data to Snowflake in real time through log-based Change Data Capture, with history intact. BryteFlow Ingest takes advantage of the columnar Snowflake database by capturing and applying only the deltas (changes in data), which keeps data in Snowflake in sync with data at the source.
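The idea of applying only deltas can be illustrated with a short sketch. Assume, hypothetically, that each change read from the source transaction log arrives as a `ChangeEvent` carrying a log position (`lsn`), an operation code and the row's key; the replica is modelled as an in-memory dictionary rather than a Snowflake table. Events are applied in log order, and anything at or below the last applied position is skipped, so replaying a batch does not corrupt the replica. This is a generic illustration of log-based CDC, not BryteFlow Ingest's internals.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ChangeEvent:
    lsn: int                    # hypothetical position in the source transaction log
    op: str                     # "I" = insert, "U" = update, "D" = delete
    key: int                    # primary key of the changed row
    row: Optional[dict] = None  # new column values (None for deletes)


def apply_deltas(replica: Dict[int, dict], events: List[ChangeEvent], last_lsn: int) -> int:
    """Apply only changes newer than last_lsn to the replica; return the new high-water mark."""
    for ev in sorted(events, key=lambda e: e.lsn):
        if ev.lsn <= last_lsn:
            continue  # already applied, so replaying a batch is harmless
        if ev.op in ("I", "U"):
            replica[ev.key] = dict(ev.row)  # upsert the latest row image
        elif ev.op == "D":
            replica.pop(ev.key, None)
        else:
            raise ValueError(f"unknown operation {ev.op!r}")
        last_lsn = ev.lsn
    return last_lsn
```

Tracking the high-water mark returned by `apply_deltas` is what lets a replication process pick up exactly where it stopped instead of reloading whole tables.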

Data is ready to use: get data to dashboards in minutes

BryteFlow Ingest on Snowflake provides a range of data conversions out of the box, including typecasting and GUID data type conversion, so that your data is ready for analytical consumption or machine learning.
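As an illustration of what these conversions involve, the sketch below casts string values into typed Python values and renders a binary GUID as its canonical text form. The column names, the `_guid` naming convention and the `CASTS` table are hypothetical. It assumes the GUID arrives in the mixed-endian byte order that SQL Server uses for `uniqueidentifier` values, which Python's `uuid.UUID(bytes_le=...)` decodes. BryteFlow's actual conversion rules may differ.

```python
import uuid
from datetime import datetime
from decimal import Decimal

# Hypothetical per-column casts for values that arrive as strings
CASTS = {
    "amount": Decimal,
    "quantity": int,
    "created_at": lambda s: datetime.strptime(s, "%Y-%m-%d %H:%M:%S"),
}


def guid_to_text(raw: bytes) -> str:
    """Render a 16-byte GUID stored in SQL Server's mixed-endian layout as canonical text."""
    return str(uuid.UUID(bytes_le=raw))


def typecast(row: dict) -> dict:
    """Return a copy of row with GUID columns and listed columns converted."""
    out = {}
    for col, value in row.items():
        if col.endswith("_guid") and isinstance(value, bytes):
            out[col] = guid_to_text(value)  # relies on the hypothetical *_guid naming
        elif col in CASTS and value is not None:
            out[col] = CASTS[col](value)
        else:
            out[col] = value  # leave NULLs and unlisted columns unchanged
    return out
```

Using `Decimal` rather than `float` for monetary columns avoids binary rounding errors before the data ever reaches Snowflake.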

Load data into Snowflake with speed and performance

BryteFlow Ingest uses fast, log-based CDC to replicate your data to the Snowflake data warehouse. Data is transferred to Snowflake at high speed in manageable chunks, using compression and smart partitioning.
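The chunk-and-compress pattern can be sketched in a few lines of Python. This is a generic illustration, not BryteFlow's partitioning logic: rows are grouped by a hypothetical partition column, each group is split into fixed-size chunks, and each chunk is written as a gzip-compressed CSV file named after its partition. A production loader would usually size chunks by bytes rather than by row count; Snowflake's data-loading guidance suggests compressed files of roughly 100 to 250 MB.

```python
import csv
import gzip
import io
from collections import defaultdict
from typing import Dict, List


def write_compressed_chunks(rows: List[dict], partition_col: str,
                            max_rows_per_chunk: int) -> Dict[str, bytes]:
    """Group rows by partition value, split each group into chunks, gzip each chunk as CSV.

    Returns a mapping of file name -> compressed bytes.
    """
    groups = defaultdict(list)
    for row in rows:
        groups[row[partition_col]].append(row)

    files = {}
    for part, part_rows in groups.items():
        for start in range(0, len(part_rows), max_rows_per_chunk):
            chunk = part_rows[start:start + max_rows_per_chunk]
            buf = io.StringIO()
            writer = csv.DictWriter(buf, fieldnames=list(chunk[0].keys()))
            writer.writeheader()
            writer.writerows(chunk)
            index = start // max_rows_per_chunk
            name = f"{partition_col}={part}/part-{index:05d}.csv.gz"
            files[name] = gzip.compress(buf.getvalue().encode("utf-8"))
    return files
```

Each resulting file could then be uploaded to a stage and loaded with a `COPY INTO` command; many moderately sized files let Snowflake load in parallel.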

Automated DDL and performance tuning in the AWS-Snowflake environment

BryteFlow helps you tune performance in the AWS-Snowflake environment by automating DDL (Data Definition Language), the subset of SQL used to create and alter database objects such as tables.
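To show what automated DDL means in practice, here is a sketch that generates a Snowflake `CREATE TABLE` statement from source column metadata. The `TYPE_MAP` below covers only a handful of SQL Server types and is a hypothetical, simplified mapping; a real tool would also handle precision, scale, lengths and schema changes over time. Note that Snowflake records `PRIMARY KEY` constraints but does not enforce them on standard tables.

```python
from typing import List, Tuple

# Hypothetical, simplified mapping from SQL Server column types to Snowflake types
TYPE_MAP = {
    "int": "NUMBER(10,0)",
    "bigint": "NUMBER(19,0)",
    "bit": "BOOLEAN",
    "datetime2": "TIMESTAMP_NTZ",
    "nvarchar": "VARCHAR",
    "uniqueidentifier": "VARCHAR(36)",
}


def create_table_ddl(table: str, columns: List[Tuple[str, str, bool]],
                     primary_key: List[str]) -> str:
    """Build a CREATE TABLE statement from (name, source_type, nullable) column metadata."""
    lines = []
    for name, src_type, nullable in columns:
        sf_type = TYPE_MAP.get(src_type.lower())
        if sf_type is None:
            raise ValueError(f"no Snowflake mapping for source type {src_type!r}")
        lines.append(f"  {name} {sf_type}" + ("" if nullable else " NOT NULL"))
    if primary_key:
        # Stored as metadata only; Snowflake does not enforce it on standard tables
        lines.append(f"  PRIMARY KEY ({', '.join(primary_key)})")
    return f"CREATE TABLE IF NOT EXISTS {table} (\n" + ",\n".join(lines) + "\n);"
```

Failing loudly on an unmapped type is a deliberate choice here: silently defaulting to `VARCHAR` would hide schema problems until query time.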

Snowflake S3 integration from BryteFlow

You can choose to transform and retain data on Amazon S3 and push it selectively to Snowflake for multiple use cases, including analytics and machine learning, or to replicate and transform data directly on the Snowflake data warehouse itself.

Prepare and load data from S3 to Snowflake for faster performance

BryteFlow frees up the resources of the Snowflake data warehouse by preparing your data on Amazon S3 and pushing only the data you need for querying to Snowflake.

Save on storage costs with Snowflake S3 integration

You can choose to store all your data on Amazon S3, where storage costs are typically much lower. On the Snowflake data warehouse you pay only for the compute resources you actually use, which can translate into large savings on data costs. Keeping only the data you need in Snowflake also improves the performance of the Snowflake cluster.

Automated Data Reconciliation on the Snowflake data warehouse

BryteFlow TruData, our data reconciliation tool, helps ensure that you always get high-quality, reconciled data. It continually reconciles data in your Snowflake database with data at the source, automatically providing flexible comparisons and matching source and destination datasets.
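A simple form of reconciliation compares row counts and per-row hashes keyed by primary key. The sketch below is a generic illustration, not how TruData works: `source` and `target` are hypothetical in-memory dictionaries mapping keys to rows, and each row is hashed with SHA-256 over a JSON encoding with sorted keys so that column order does not matter. At scale, the same idea is usually applied to aggregates computed per chunk on each side, so that only mismatching chunks need a row-level comparison.

```python
import hashlib
import json
from typing import Any, Dict


def row_digest(row: dict) -> str:
    # sort_keys makes the hash independent of column order; default=str covers dates, decimals
    payload = json.dumps(row, sort_keys=True, default=str)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def reconcile(source: Dict[Any, dict], target: Dict[Any, dict]) -> Dict[str, Any]:
    """Compare two key -> row mappings and report what differs."""
    src_keys, tgt_keys = set(source), set(target)
    mismatched = sorted(
        k for k in src_keys & tgt_keys
        if row_digest(source[k]) != row_digest(target[k])
    )
    return {
        "missing_in_target": sorted(src_keys - tgt_keys),
        "extra_in_target": sorted(tgt_keys - src_keys),
        "mismatched": mismatched,
        "counts_match": len(source) == len(target),
    }
```

Note that matching counts alone prove little: in the usage below, both sides hold three rows, yet one row is missing, one is extra and one differs.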

Ingest bulk data automatically to your Snowflake database with BryteFlow XL Ingest

If you have petabytes of data to replicate to your Snowflake data warehouse, BryteFlow XL Ingest can do it automatically, at high speed, in a few clicks. BryteFlow XL Ingest was built specifically to replicate large datasets, with tables over 50 GB, to your Snowflake database.
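One common way to bulk-load very large tables is to split them by primary-key range so that several workers can extract slices in parallel. The sketch below only illustrates that general technique and does not describe how XL Ingest works. It assumes an integer primary key whose values are reasonably evenly spread; the `key_ranges` and `extract_queries` helpers and the table and column names are hypothetical.

```python
from typing import List, Tuple


def key_ranges(min_key: int, max_key: int, parts: int) -> List[Tuple[int, int]]:
    """Split the inclusive key range [min_key, max_key] into contiguous, non-overlapping slices."""
    if parts < 1 or max_key < min_key:
        raise ValueError("need parts >= 1 and max_key >= min_key")
    total = max_key - min_key + 1
    size, extra = divmod(total, parts)
    ranges, start = [], min_key
    for i in range(parts):
        length = size + (1 if i < extra else 0)  # spread the remainder over the first slices
        if length == 0:
            break  # more parts than keys
        ranges.append((start, start + length - 1))
        start += length
    return ranges


def extract_queries(table: str, key: str, ranges: List[Tuple[int, int]]) -> List[str]:
    # Each query could run on its own worker; names are illustrative only
    return [f"SELECT * FROM {table} WHERE {key} BETWEEN {lo} AND {hi}" for lo, hi in ranges]
```

For example, `key_ranges(1, 10, 3)` yields `[(1, 4), (5, 7), (8, 10)]`. If the keys are heavily skewed, slices based on sampled quantiles would balance work better than equal-width ranges.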

Dashboard to monitor data latency and status of data ingestion on Snowflake data warehouse

Stay on top of your data ingestion to the Snowflake database with the BryteFlow ControlRoom. It shows the details of your Snowflake ingestion, including latency, operation start and end times, the volume of data ingested and the data remaining.

Data transformation with data from any database, incremental files or APIs

BryteFlow Blend, our data transformation tool, enables you to merge and transform data from virtually any source, including any database, flat file or API, for querying on Snowflake.

Data migration from Teradata and Netezza to the Snowflake database

BryteFlow can easily migrate your data from data warehouses such as Teradata and Netezza to your Snowflake data warehouse when you need to move it.

Get built-in resiliency for Snowflake integration

BryteFlow has an automatic network catch-up mode: after a power outage or system shutdown, it resumes where it left off once normal conditions are restored. This is ideal for Snowflake's big data environment, which routinely handles the ingestion and preparation of thousands of petabytes of data.
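Resuming where a process left off generally depends on persisting a checkpoint after each unit of work. The Python sketch below shows that general pattern under stated assumptions; it is not BryteFlow's mechanism. Batches are hypothetical `(end_position, records)` pairs in log order, the `apply_batch` callback stands in for loading a batch into Snowflake, and the checkpoint is a small JSON file written atomically with `os.replace`, so a crash leaves either the old or the new checkpoint, never a partial one.

```python
import json
import os
import tempfile
from typing import Callable, List, Tuple


def save_checkpoint(path: str, position: int) -> None:
    """Persist the last fully applied log position atomically."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory)  # temp file on the same filesystem
    with os.fdopen(fd, "w") as f:
        json.dump({"position": position}, f)
    os.replace(tmp, path)  # atomic rename over the previous checkpoint


def load_checkpoint(path: str) -> int:
    """Return the saved position, or 0 on the first run."""
    try:
        with open(path) as f:
            return json.load(f)["position"]
    except FileNotFoundError:
        return 0


def run_batches(batches: List[Tuple[int, list]],
                apply_batch: Callable[[list], None], path: str) -> None:
    """Apply batches in order, skipping any that the checkpoint says are already done."""
    done = load_checkpoint(path)
    for end_position, records in batches:
        if end_position <= done:
            continue
        apply_batch(records)  # if this raises, the checkpoint still points at the last good batch
        save_checkpoint(path, end_position)
        done = end_position
```

Because a batch's checkpoint is saved only after `apply_batch` succeeds, a failure mid-batch causes that batch to be retried on restart, so `apply_batch` should be idempotent (for example, a merge on primary key).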

Save on Snowflake data costs

BryteFlow data replication uses very little compute, so you can reduce your Snowflake data costs.

Why use the Snowflake Cloud Data Warehouse?

Snowflake, the cloud data warehouse, is based on SQL and is easy to use.

Data can be queried with standard SQL

The Snowflake database was designed as a fully functional SQL database. It is a relational database with columnar storage that works with Excel, Tableau and other common software. Snowflake data can be queried with standard SQL, which data analysts already know well, so they can get started on analytics quickly.

Snowflake is a highly automated SaaS offering

Your Snowflake SQL database does not require expensive hardware or software to be installed or configured. Snowflake's custom-built big data infrastructure is fully managed and maintained by Snowflake.

The Snowflake data warehouse is highly dynamic and scalable

You don't need to worry about the size of your Snowflake database. The Snowflake data warehouse is highly dynamic and scalable. Snowflake uses a multi-cluster, shared-data architecture in which each compute cluster accesses the same data but runs independently, without conflicting with the others. This is ideal for running large queries and operations simultaneously.

Snowflake data is extremely secure

The Snowflake data warehouse automatically encrypts all data, and multi-factor authentication and granular access control add further protection. Snowflake uses third-party certification and validation to make sure security standards are met. Access auditing is available for everything, including data objects and actions within your Snowflake database.