Automated PostgreSQL replication to Snowflake

Why you should use BryteFlow for PostgreSQL migration to Snowflake

Our PostgreSQL migration to Snowflake is as simple as it can get. BryteFlow’s automated data replication moves your PostgreSQL data to Snowflake in real time with just a few clicks and requires absolutely no coding. You no longer need to write SQL queries to retrieve data from your relational databases.

PostgreSQL replication to Snowflake with log-based Change Data Capture

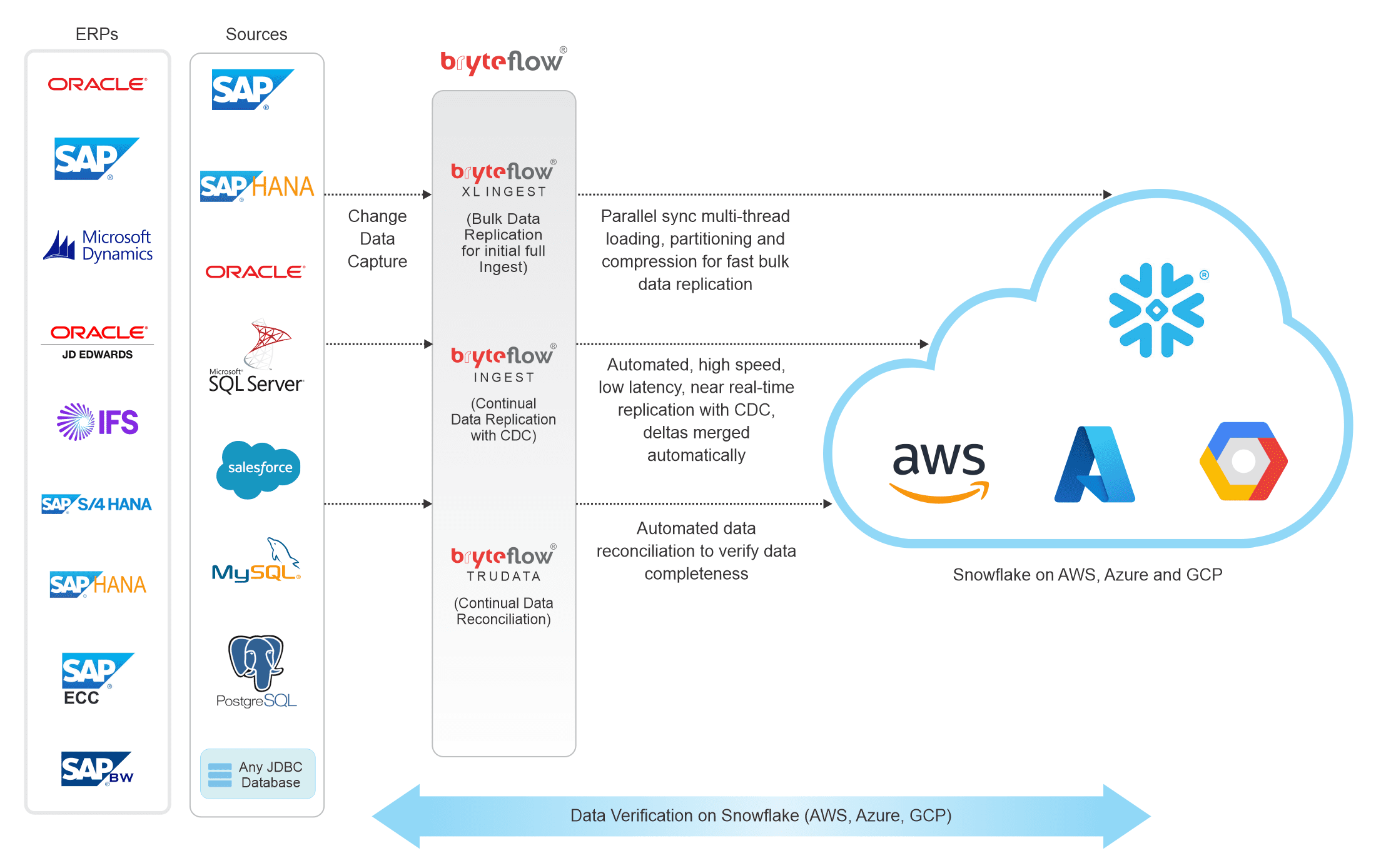

BryteFlow replicates your data from PostgreSQL to Snowflake using Change Data Capture and refreshes it continually, in near real-time, to keep up with changes at source. BryteFlow’s easy point-and-click interface and built-in automation make data replication to Snowflake a breeze. Just a few clicks to connect and you can begin to access prepared data in Snowflake for analytics. You can merge data from different sources with your PostgreSQL data and transform it into a consumable format in Snowflake. You can trust your data because it is automatically reconciled with data at source, and the automated reconciliation tool raises alerts if data is missing or incomplete.


Replication of your Postgres database to Snowflake – highlights

- Low-latency, log-based CDC replication of your Postgres database with minimal impact on the source.

- Optimised for Snowflake. Transform data with ETL in Snowflake.

- No coding needed: the automated interface creates an exact replica or SCD Type 2 history on Snowflake.

- Manage bulk data ingests easily with parallel loading and automated partitioning mechanisms for high speed.

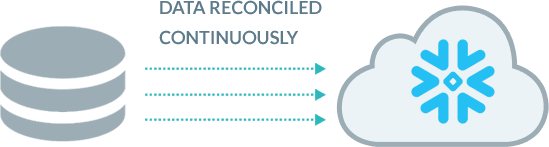

- Automated data reconciliation to validate completeness of data.

Codeless, fast PostgreSQL replication to Snowflake

A data replication tool with fast bulk data ingestion that replicates data from Postgres to Snowflake in minutes

When your data tables are true Godzillas, Postgres tables included, most data replication software rolls over and dies. Not BryteFlow Ingest. It tackles terabytes of data for replication head-on. Its sister program, BryteFlow XL Ingest, has been specially created to replicate large datasets using smart partitioning and multi-threaded parallel loading for super-fast initial ingests.
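
The general idea behind partitioned, parallel initial loads can be illustrated with a short sketch: split a large table's primary-key range into contiguous chunks and load them concurrently. This is a generic illustration, not BryteFlow XL Ingest’s implementation. The in-memory `table` dict stands in for a PostgreSQL table, and `load_partition` is a hypothetical stand-in for a range query plus a staged load into Snowflake.

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor


def split_key_range(min_key: int, max_key: int, partitions: int) -> list[tuple[int, int]]:
    """Split the inclusive key range [min_key, max_key] into contiguous, non-overlapping chunks."""
    if partitions < 1 or max_key < min_key:
        raise ValueError("need partitions >= 1 and max_key >= min_key")
    size = -(-(max_key - min_key + 1) // partitions)  # ceiling division
    ranges = []
    start = min_key
    while start <= max_key:
        end = min(start + size - 1, max_key)
        ranges.append((start, end))
        start = end + 1
    return ranges


def load_partition(source: dict[int, dict], bounds: tuple[int, int]) -> list[dict]:
    """Hypothetical stand-in for a range query (id BETWEEN lo AND hi) plus a staged load."""
    lo, hi = bounds
    return [row for key, row in sorted(source.items()) if lo <= key <= hi]


def parallel_initial_load(source: dict[int, dict], min_key: int, max_key: int,
                          partitions: int = 4, workers: int = 4) -> list[dict]:
    """Load every partition concurrently; pool.map returns results in partition order."""
    ranges = split_key_range(min_key, max_key, partitions)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        chunks = list(pool.map(lambda bounds: load_partition(source, bounds), ranges))
    return [row for chunk in chunks for row in chunk]


if __name__ == "__main__":
    table = {i: {"id": i} for i in range(1, 11)}
    print(split_key_range(1, 10, 3))  # [(1, 4), (5, 8), (9, 10)]
    print(len(parallel_initial_load(table, 1, 10, partitions=3, workers=2)))  # 10
```

Range partitioning on the key keeps each chunk independent, so a failed chunk can be retried on its own without reloading the whole table.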

How much time do your Database Administrators need to spend on managing the replication?

You need to work out how much time your DBAs will spend on the solution: managing backups, managing dependencies until the changes have been processed, and configuring full backups. Only then can you work out the true Total Cost of Ownership (TCO) of the solution. In most of these replication scenarios, the replication user also needs the highest sysadmin privileges.

With BryteFlow, it is “set and forget”. DBAs do not need to be involved on an ongoing basis, so the TCO is much lower. You also do not need sysadmin privileges for the replication user.

PostgreSQL replication to Snowflake is completely automated

Most PostgreSQL data tools will set up connectors and pipelines to replicate your Postgres database to Snowflake, but there is usually coding involved at some point, for example to merge changed data for basic PostgreSQL CDC. With BryteFlow you never face any of those annoyances. Data replication, data merges, SCD Type 2 history, data transformation and data reconciliation are all automated and self-service, with a point-and-click interface that ordinary business users can use with ease.

Is your data from PostgreSQL to Snowflake monitored for data completeness from start to finish?

BryteFlow provides end-to-end monitoring of data. Reliability is our strong focus, because the success of analytics projects depends on it. Unlike other software that sets up connectors and pipelines to PostgreSQL source applications and streams your data without checking its accuracy or completeness, BryteFlow makes a point of tracking your data. For example, if you are replicating PostgreSQL data to Snowflake at 2pm on Thursday, Nov. 2019, all the changes made up to that point are replicated to the Snowflake database in order, latest change last, so the replicated data contains all the inserts, updates and deletes present at source at that point in time.
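
A point-in-time replica like the one described above can be illustrated by applying change events in commit order up to a cutoff, latest change last. This is a minimal sketch of the general technique, not BryteFlow’s code. The `Change` record shape is a hypothetical simplification, and `lsn` (log sequence number) is modelled as a plain integer for ordering.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Change:
    """One decoded change event (hypothetical shape, not a BryteFlow or PostgreSQL format)."""
    commit_time: datetime
    lsn: int                      # position in the source log; orders changes within a commit
    op: str                       # "insert", "update" or "delete"
    key: int
    row: Optional[dict] = None    # new row image; None for deletes


def replicate_as_of(changes: list[Change], cutoff: datetime) -> dict[int, dict]:
    """Apply every change committed at or before `cutoff`, oldest first, latest change last."""
    target: dict[int, dict] = {}
    for change in sorted(changes, key=lambda c: (c.commit_time, c.lsn)):
        if change.commit_time > cutoff:
            break  # everything after this is outside the point-in-time snapshot
        if change.op == "delete":
            target.pop(change.key, None)
        elif change.op in ("insert", "update"):
            target[change.key] = dict(change.row)
        else:
            raise ValueError(f"unknown operation: {change.op!r}")
    return target
```

Because changes are applied strictly in log order, a row that was inserted and then deleted before the cutoff correctly ends up absent from the replica.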

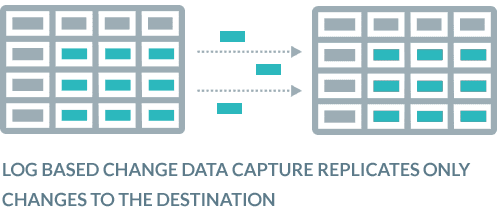

Does your data integration software use time-consuming ETL or efficient PostgreSQL CDC to replicate changes?

Very often software depends on a full refresh to update destination data with changes at source. This is time-consuming and affects source systems negatively, impacting productivity and performance. BryteFlow uses log-based PostgreSQL CDC to Snowflake, which has minimal impact on the source: instead of querying the PostgreSQL tables, it reads the database transaction logs (PostgreSQL’s write-ahead log) and copies only the changes into the Snowflake database. The data in the Snowflake data warehouse is updated in real time or at a frequency of your choice. Log-based CDC is absolutely the fastest, most efficient way to replicate your PostgreSQL data to Snowflake.
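
Copying “only the changes” usually means collapsing a batch of captured changes to one net action per key and then merging that into the target, which in Snowflake would typically be a `MERGE` from a staging table. The sketch below shows that idea in plain Python under stated assumptions: the `(log_position, op, key, row)` record shape is hypothetical, and a dict stands in for the Snowflake table. It is not BryteFlow’s merge logic.

```python
from __future__ import annotations

from typing import Optional

# (log_position, op, key, row) where op is "insert", "update" or "delete"; row is None for deletes
ChangeRecord = tuple[int, str, int, Optional[dict]]


def net_changes(batch: list[ChangeRecord]) -> dict[int, tuple[str, Optional[dict]]]:
    """Collapse a batch to one final action per key; later log positions win."""
    latest: dict[int, tuple[str, Optional[dict]]] = {}
    for _position, op, key, row in sorted(batch, key=lambda rec: rec[0]):
        latest[key] = (op, row)
    return latest


def merge_into(target: dict[int, dict], batch: list[ChangeRecord]) -> dict[int, dict]:
    """Apply only the net changes, as a MERGE would: upsert or delete per key."""
    for key, (op, row) in net_changes(batch).items():
        if op == "delete":
            target.pop(key, None)
        else:  # inserts and updates both end as an upsert of the final row image
            target[key] = row
    return target
```

Rows that did not change are never touched, which is the efficiency gain over a full refresh: the work scales with the number of changed keys, not the table size.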

Your data maintains Referential Integrity

With BryteFlow you can maintain the referential integrity of your data when replicating data from Postgres to Snowflake. What does this mean? Simply put, here it means that when changes occur in the PostgreSQL source and are replicated to the destination (Snowflake), you can pinpoint exactly the date, the time and the values that changed at the column level.
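
Pinpointing which values changed at the column level can be sketched as a diff between a row’s before and after images, stamped with the change time. This is an illustration of the idea, not BryteFlow’s mechanism. It assumes the change record carries both images; in PostgreSQL logical decoding, full old-row images for updates generally require `REPLICA IDENTITY FULL` on the table.

```python
from datetime import datetime


def column_changes(before: dict, after: dict, changed_at: datetime) -> list[tuple]:
    """Return (column, old_value, new_value, changed_at) for each column whose value differs."""
    changes = []
    # Union of column names so added or removed columns are reported too
    for column in sorted(set(before) | set(after)):
        old, new = before.get(column), after.get(column)
        if old != new:
            changes.append((column, old, new, changed_at))
    return changes
```

For an order whose status moves from "open" to "closed", only the `status` column is reported, together with the timestamp of the change.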

Continual, automated data reconciliation in the Snowflake cloud data warehouse

With BryteFlow, data in the Snowflake cloud data warehouse is validated against data in the Postgres database continually, or at a frequency you choose. It performs point-in-time completeness checks on complete datasets, including SCD Type 2 datasets. It compares row counts and column checksums between the PostgreSQL database and Snowflake at a very granular level. Very few data integration tools provide this feature.
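
Reconciliation by row counts and column checksums can be illustrated with a small sketch. The checksum here is an assumption chosen for illustration: an order-independent sum of SHA-256 prefixes modulo 2**64, so row order does not matter. It also assumes values have already been normalized to comparable representations on both sides, since PostgreSQL and Snowflake types may render differently. This is not BryteFlow’s reconciliation algorithm.

```python
import hashlib


def column_checksum(rows: list[dict], column: str) -> int:
    """Order-independent checksum: sum of per-value SHA-256 prefixes, modulo 2**64."""
    total = 0
    for row in rows:
        digest = hashlib.sha256(repr(row.get(column)).encode("utf-8")).digest()
        total = (total + int.from_bytes(digest[:8], "big")) % 2**64
    return total


def reconcile(source_rows: list[dict], target_rows: list[dict], columns: list[str]) -> list[str]:
    """Compare row counts and per-column checksums; an empty list means the data matches."""
    issues = []
    if len(source_rows) != len(target_rows):
        issues.append(f"row count differs: source={len(source_rows)}, target={len(target_rows)}")
    for column in columns:
        if column_checksum(source_rows, column) != column_checksum(target_rows, column):
            issues.append(f"checksum differs for column {column!r}")
    return issues
```

Per-column checksums compare each column’s set of values, so they cannot detect values swapped between two rows; a per-row hash keyed on the primary key would catch that at extra cost.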

An option to archive data while preserving SCD Type 2 history

BryteFlow provides an option to archive data. It provides time-stamped data and the versioning feature allows you to retrieve data from any point on the timeline. This versioning feature is a ‘must have’ for historical and predictive trend analysis.
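
The versioning described above is typically modelled as SCD Type 2: each change closes the current version of a row and opens a new one, so any point on the timeline can be queried. The sketch below is a generic in-memory illustration, not BryteFlow’s schema. The `valid_from`/`valid_to` names and the `datetime.max` sentinel for the current version are assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime
from typing import Optional

OPEN_END = datetime.max  # sentinel valid_to for the current version


@dataclass
class Version:
    key: int
    value: dict
    valid_from: datetime
    valid_to: datetime = OPEN_END


def apply_scd2(history: list[Version], key: int, value: Optional[dict], changed_at: datetime) -> None:
    """Close the open version of `key`, then append a new one unless `value` is None (a delete)."""
    for version in history:
        if version.key == key and version.valid_to == OPEN_END:
            version.valid_to = changed_at
    if value is not None:
        history.append(Version(key, value, changed_at))


def as_of(history: list[Version], key: int, when: datetime) -> Optional[dict]:
    """Return the value of `key` valid at `when`, using half-open [valid_from, valid_to) intervals."""
    for version in history:
        if version.key == key and version.valid_from <= when < version.valid_to:
            return version.value
    return None
```

Half-open intervals mean that at the exact moment of a change, exactly one version is valid, so point-in-time queries never return two rows for the same key.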

Does your data replication catch up automatically after a network dropout?

If there is a power outage or network failure, will you need to start the PostgreSQL to Snowflake data replication over again? Yes, with most software, but not with BryteFlow. You can simply pick up where you left off – automatically.
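
Resuming where replication left off generally relies on a durable checkpoint of the last applied log position. In PostgreSQL, logical replication slots retain WAL until the consumer confirms it has processed it, which is one way such a resume point can be honoured at the source. The sketch below shows only the consumer-side checkpoint idea; the JSON file, the `(position, key, row)` log shape and the `fail_after` dropout simulation are hypothetical, and this is not BryteFlow’s recovery mechanism.

```python
import json
import os
import tempfile


class Checkpoint:
    """Persists the last applied log position so replication can resume after an interruption."""

    def __init__(self, path: str):
        self.path = path

    def load(self) -> int:
        try:
            with open(self.path, encoding="utf-8") as f:
                return json.load(f)["last_applied"]
        except FileNotFoundError:
            return 0  # no checkpoint yet: start from the beginning of the log

    def save(self, position: int) -> None:
        # Write a temp file, then atomically replace, so a crash never leaves a half-written checkpoint
        directory = os.path.dirname(os.path.abspath(self.path))
        fd, tmp_path = tempfile.mkstemp(dir=directory)
        with os.fdopen(fd, "w", encoding="utf-8") as f:
            json.dump({"last_applied": position}, f)
        os.replace(tmp_path, self.path)


def replicate(log, target: dict, checkpoint: Checkpoint, fail_after=None) -> int:
    """Apply (position, key, row) entries newer than the checkpoint; return how many were applied.

    Upserts are idempotent, so re-applying an entry after a crash between the
    write and the checkpoint save is harmless. `fail_after` simulates a dropout.
    """
    start = checkpoint.load()
    applied = 0
    for position, key, row in log:
        if position <= start:
            continue  # already applied before the interruption
        if fail_after is not None and applied == fail_after:
            raise ConnectionError("simulated network dropout")
        target[key] = row
        checkpoint.save(position)
        applied += 1
    return applied
```

After a simulated dropout, calling `replicate` again skips everything up to the saved position and applies only the remaining entries.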

Merge and transform PostgreSQL data with data from other sources

With BryteFlow you can merge and transform any kind of data from multiple sources on S3 (if you are on AWS) with your PostgreSQL data for analytics or machine learning.

Is the data replication tool faster than GoldenGate?

BryteFlow data replication definitely is. This is based on actual experience with a client and not an idle boast. Try out BryteFlow for yourself and see exactly how fast it migrates your PostgreSQL data to Snowflake.

About PostgreSQL Database

PostgreSQL is an open-source database that supports both SQL (relational) and JSON (non-relational) queries. It is an advanced, enterprise-class relational database that can serve as the foundational database for web, mobile and analytics applications. PostgreSQL is known for its data integrity, architecture, robust feature set, reliability and extensibility. It combines the SQL language with many features that store and scale complex data workloads safely. It is a highly stable database, backed by more than 20 years of development by the open-source community.

About Snowflake Data Warehouse

The Snowflake Data Warehouse, or Snowflake as it is popularly known, is a cloud-based data warehouse that is extremely scalable and high-performance. It is a SaaS (Software as a Service) solution based on ANSI SQL with a unique architecture: a hybrid of traditional shared-disk and shared-nothing architectures. Users can create tables and start querying them with a minimum of preliminary administration.